Spring Boot is one of the most popular frameworks for Java application development, but one of its main drawbacks is startup time. Project Leyden is an OpenJDK project that aims to reduce it without giving up the JVM execution model.

In this article, we will briefly look at the differences between GraalVM and Project Leyden, and then move to the practical part to understand how to use Project Leyden to improve the startup time of a Spring Boot application that integrates AWS services.

JVM metrics

Startup Time

Startup time is the time required to complete the application's first task. It includes class loading, linking, and the execution of static initialization blocks.
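
As a rough illustration of this metric, the sketch below measures the time elapsed between JVM start and the completion of a trivial "first task". The class name StartupTimeProbe and the stand-in task are assumptions made for the example; the management bean itself also adds a little class loading, so the number is only indicative.

```java
import java.lang.management.ManagementFactory;
import java.lang.management.RuntimeMXBean;

class StartupTimeProbe {

    /** Milliseconds elapsed between JVM start and the moment this method is called. */
    static long millisSinceJvmStart() {
        RuntimeMXBean runtime = ManagementFactory.getRuntimeMXBean();
        // getStartTime() is the approximate JVM start time in epoch milliseconds
        return System.currentTimeMillis() - runtime.getStartTime();
    }

    public static void main(String[] args) {
        // Stand-in for the application's real "first task" (hypothetical)
        String firstResult = "hello".toUpperCase();
        System.out.println("First task done (" + firstResult + ") after "
                + millisSinceJvmStart() + " ms");
    }
}
```

Spring Boot reports a similar figure on its own in the "Started ... in N seconds" log line, which is the value used in the tests later in this article.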

Time to Peak

Java does not reach maximum speed instantly. Peak performance is the highest level of throughput that the app can sustain. "Time to Peak" is the time required for the most critical code to be compiled at Tier 4, that is, by the C2 compiler, which applies the most aggressive optimizations.

Speculation and Deoptimization

This is the real "magic" of the JVM: the JIT compiler makes bets (speculations). For example, if it sees that an interface has only one implementation during profiling, it compiles the code assuming that it will always be so. If a new class is later loaded and breaks that assumption, the JVM performs a deoptimization: it discards the optimized code and returns to the interpreter to recalculate the strategy.
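
The scenario can be sketched with a small program. Everything here (the Shape, Circle and Square types, the iteration counts) is hypothetical and chosen only for illustration; whether and when HotSpot actually speculates or deoptimizes depends on the JVM version and flags.

```java
import java.util.Arrays;

class SpeculationDemo {

    interface Shape { double area(); }

    static final class Circle implements Shape {
        private final double radius;
        Circle(double radius) { this.radius = radius; }
        public double area() { return Math.PI * radius * radius; }
    }

    static final class Square implements Shape {
        private final double side;
        Square(double side) { this.side = side; }
        public double area() { return side * side; }
    }

    static double totalArea(Shape[] shapes) {
        double sum = 0;
        for (Shape shape : shapes) {
            sum += shape.area(); // interface call site the JIT may speculate on
        }
        return sum;
    }

    public static void main(String[] args) {
        // Warm-up: only Circle reaches the call site, so the JIT may
        // compile totalArea assuming Circle is the only receiver type.
        Shape[] circles = new Shape[1_000];
        Arrays.fill(circles, new Circle(1.0));
        double warmup = 0;
        for (int i = 0; i < 20_000; i++) {
            warmup += totalArea(circles);
        }

        // A second implementation now reaches the same call site: if the
        // JIT had bet on Circle only, the compiled code must be discarded.
        Shape[] mixed = { new Circle(1.0), new Square(2.0) };
        System.out.println("warm-up total = " + warmup);
        System.out.println("mixed total   = " + totalArea(mixed));
    }
}
```

Running it with the HotSpot flag -XX:+PrintCompilation should show totalArea being compiled and, possibly, marked "made not entrant" after the Square instance appears.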

Standard behavior of a JVM application

When we start a Java application, a multi-stage process called Tiered Compilation begins:

T0 - Interpreter (Slow): The JVM reads Java bytecode instruction by instruction and translates it "on the fly" into CPU instructions. This is the slowest phase, but it allows the app to start immediately without waiting for compilation.

T1-T3 - C1 JIT (Fast): As soon as a method becomes "hot", the C1 compiler transforms it into native code with lightweight optimizations. While executing this code, the JVM also observes which methods are called most often and which data types flow through the code: these observations are called "profiles" and will be used in the next step.

T4 - C2 JIT (Maximum speed): When code is heavily used, the C2 compiler takes over. It uses the profiling data collected by C1 to apply aggressive optimizations (such as inlining or loop unrolling).

This process is not one-way: the JVM can return to previous stages if its assumptions turn out to be wrong (speculation and deoptimization).
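
To observe this warm-up from inside a program, a minimal sketch can read the cumulative JIT compilation time before and after a hot loop. The class name and loop sizes are assumptions for the example, and the reported times will vary from run to run.

```java
import java.lang.management.CompilationMXBean;
import java.lang.management.ManagementFactory;

class TieredWarmupDemo {

    /** Small method that becomes "hot" when called many times. */
    static long sumOfSquares(int n) {
        long sum = 0;
        for (int i = 1; i <= n; i++) {
            sum += (long) i * i;
        }
        return sum;
    }

    /** Cumulative JIT compilation time in ms, or -1 if the JVM does not expose it. */
    static long jitMillis() {
        CompilationMXBean jit = ManagementFactory.getCompilationMXBean();
        if (jit == null || !jit.isCompilationTimeMonitoringSupported()) {
            return -1;
        }
        return jit.getTotalCompilationTime();
    }

    public static void main(String[] args) {
        long before = jitMillis();
        long checksum = 0;
        // Repeated calls give C1 and then C2 a reason to compile sumOfSquares
        for (int round = 0; round < 50_000; round++) {
            checksum += sumOfSquares(1_000);
        }
        long after = jitMillis();
        System.out.println("checksum = " + checksum);
        System.out.println("JIT time before = " + before + " ms, after = " + after + " ms");
    }
}
```

For a per-method view, the same program can be launched with -XX:+PrintCompilation, whose output includes the tier at which each method was compiled.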

AOT with GraalVM

GraalVM is a technology that allows a Java application to be compiled ahead of time (AOT) into a native executable, removing the need for a JVM at runtime and drastically reducing startup times.

The core of this technology is Native Image: instead of compiling code "Just-In-Time" while the app is running, GraalVM performs all the heavy work during the build phase:

- Scanning: GraalVM analyzes all application code, dependencies, and JDK code itself.

- Reachability analysis: It identifies only the code that is actually used and discards everything else.

- Result: A platform-specific executable is generated, which does not need an external JVM to run.

The philosophy behind this approach is the "Closed World Assumption": all reachable code must be known at compile time. This means that all classes, methods, and resources used by the application must be specified explicitly, otherwise they might not be included in the native image, causing runtime errors.

As a result, dynamic features such as reflection, dynamic proxies, and dynamic class loading do not work automatically and require explicit configuration (for example through the reflect-config.json file). This is a concrete limitation for many Java applications that make heavy use of them, especially those based on Spring Boot.
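
The kind of code that causes trouble looks like the sketch below: the class name is only known at runtime, so static analysis cannot see which type must be kept. GreetingHandler and greetVia are hypothetical names used for illustration.

```java
class ReflectionDemo {

    /** Hypothetical handler type, never referenced by name in the call path below. */
    public static class GreetingHandler {
        public String handle(String name) {
            return "Hello, " + name;
        }
    }

    /** Loads a class by name, instantiates it and calls handle(String) reflectively. */
    static String greetVia(String className, String name) {
        try {
            Class<?> type = Class.forName(className);
            Object handler = type.getDeclaredConstructor().newInstance();
            return (String) type.getMethod("handle", String.class).invoke(handler, name);
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException("Reflective call failed for " + className, e);
        }
    }

    public static void main(String[] args) {
        // The class name could come from configuration or the command line
        String className = args.length > 0 ? args[0] : GreetingHandler.class.getName();
        System.out.println(greetVia(className, "Leyden"));
    }
}
```

On a regular JVM this works with any class on the classpath. In a native image, a class that is reached only through such a runtime string generally has to be registered in the reachability metadata, otherwise the lookup can fail at runtime.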

Finally, moving all the work to build time leads to significantly longer build times than a regular JVM build. These trade-offs are acceptable in contexts where instant startup and minimal footprint are top priorities, such as microservices and serverless functions.

AOT with Project Leyden

The problem with the standard JVM is that all the work of loading, profiling, and compilation starts again from scratch at every restart. Unlike GraalVM, which solves this problem by removing the JVM at runtime, Project Leyden takes a different approach: optimize the JVM itself while preserving the dynamism that makes it powerful. If GraalVM is based on the "Closed World Assumption", Project Leyden instead follows an Open World approach: the application still runs inside a full JVM, preserving capabilities such as dynamic class loading, reflection, and a compatibility profile much closer to traditional Java.

To do that, Leyden introduces the concept of a Training Run: a preliminary execution of the application in which the JVM collects profiling data and compiles critical code. The result is saved into an AOT Cache: an archive that contains preloaded classes, methods already compiled into native code, and a pre-initialized heap state. At each subsequent startup, the JVM reads directly from this cache instead of starting over from scratch. The process is divided into three phases:

- Shift computation: Profiling and JIT compilation are performed during the Training Run, instead of at every startup.

- Capture state: Already compiled code and already linked classes are stored in the AOT Cache.

- Lightning-fast deployment: At restart (Deployment Run), the app loads optimized code directly from the AOT Cache, drastically reducing Time to Peak.

We can think of the standard JVM as an athlete who has to warm up every morning; Leyden allows that athlete to "save" yesterday's warm-up and start running at full speed right away.
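
Outside of buildpacks, the workflow described in JEP 483 consists of a recording run, a cache creation step, and the deployment run. The sketch below lists those commands in comments and adds a small check that reports whether the current JVM was started with an AOT cache. The file names app.jar, app.aotconf and app.aot, the main class com.example.App, and the helper itself are assumptions for illustration; the check only sees flags exposed through the runtime MXBean.

```java
import java.lang.management.ManagementFactory;
import java.util.List;
import java.util.Optional;

// Workflow from JEP 483 (JDK 24+), with placeholder file and class names:
//   1. Training run:  java -XX:AOTMode=record -XX:AOTConfiguration=app.aotconf -cp app.jar com.example.App
//   2. Create cache:  java -XX:AOTMode=create -XX:AOTConfiguration=app.aotconf -XX:AOTCache=app.aot -cp app.jar
//   3. Deployment:    java -XX:AOTCache=app.aot -cp app.jar com.example.App
class AotCacheCheck {

    private static final String FLAG = "-XX:AOTCache=";

    /** Returns the AOT cache path found in the given JVM arguments, if any. */
    static Optional<String> configuredAotCache(List<String> jvmArgs) {
        return jvmArgs.stream()
                .filter(arg -> arg.startsWith(FLAG))
                .map(arg -> arg.substring(FLAG.length()))
                // Assumption: if the flag is repeated, the last occurrence is the effective one
                .reduce((first, second) -> second);
    }

    public static void main(String[] args) {
        List<String> jvmArgs = ManagementFactory.getRuntimeMXBean().getInputArguments();
        System.out.println(configuredAotCache(jvmArgs)
                .map(path -> "Deployment run using AOT cache: " + path)
                .orElse("No -XX:AOTCache flag found: regular cold start"));
    }
}
```

On JDK 25, JEP 514 shortens steps 1 and 2 into a single command through the -XX:AOTCacheOutput option.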

Project Leyden in practice

To test Project Leyden with a Spring Boot application, we will use one of my GitHub projects (https://github.com/vincenzo-racca/localstack), in which Spring Boot integrates with AWS services such as SQS and DynamoDB.

The Spring Boot version used is 3.5.13; the machine used for the tests is an Ubuntu Server 25 virtual machine with 1 vCPU and 4 GB of RAM.

For each test, the application's container image was generated with the command:

    ./mvnw clean spring-boot:build-image

Then docker compose up -d was executed to start the LocalStack containers (AWS services running locally) and the containerized Spring Boot application.

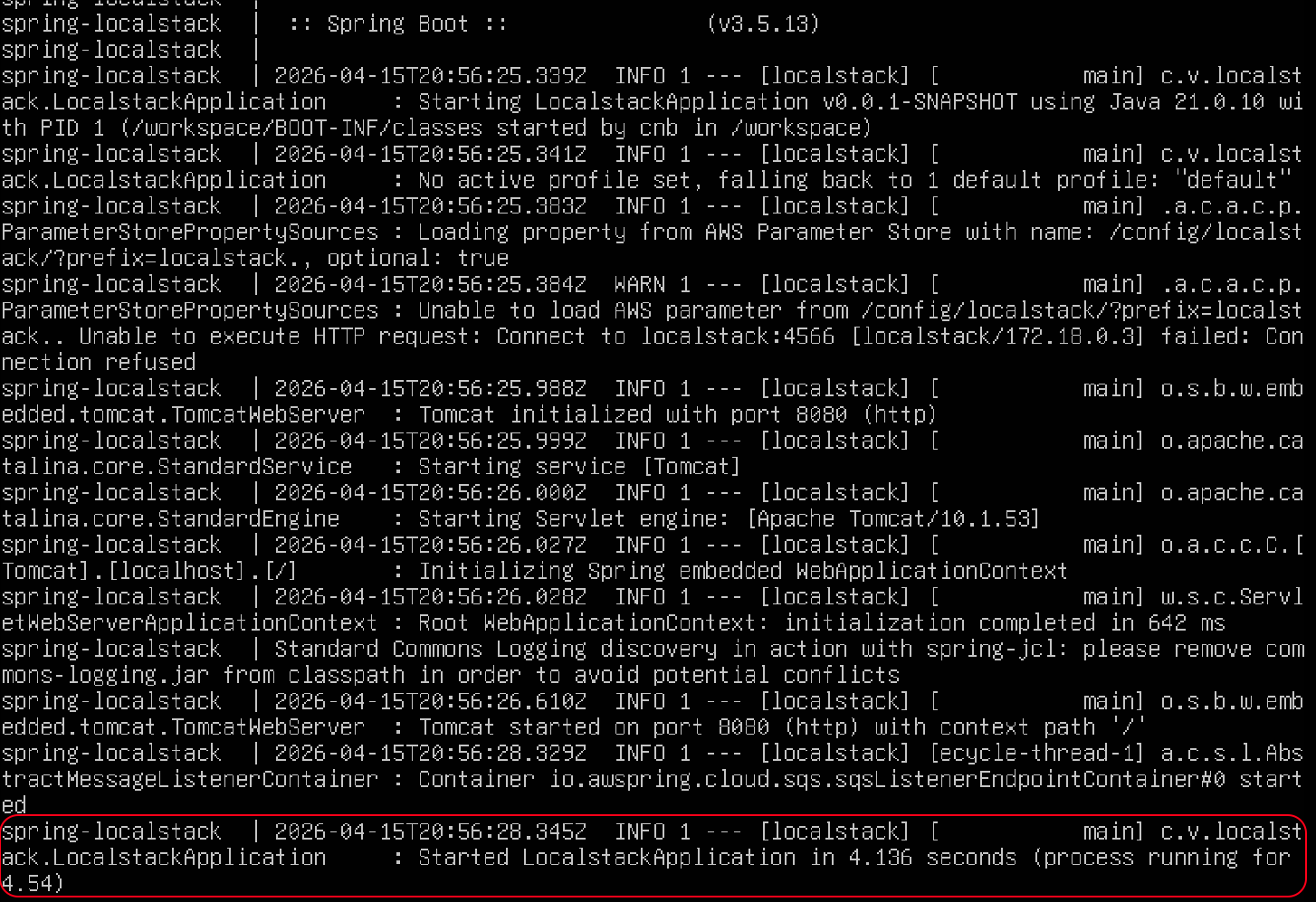

First test: standard JVM with Java 21

Application started in 4.136 seconds:

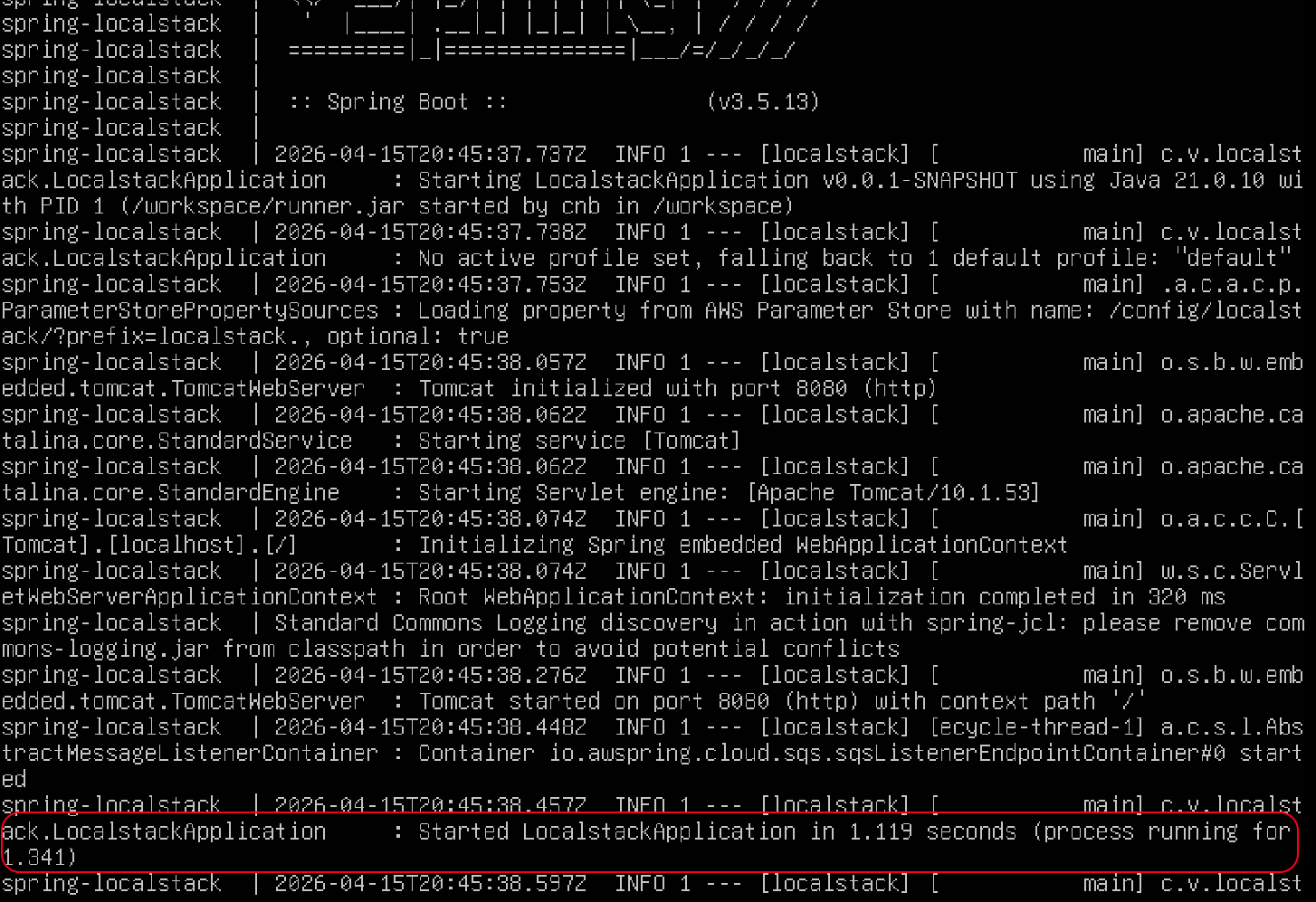

Second test: CDS with Java 21

To enable CDS, just add this configuration to pom.xml:

    <plugin>
        <groupId>org.springframework.boot</groupId>
        <artifactId>spring-boot-maven-plugin</artifactId>
        <configuration>
            <image>
                <env>
                    <BP_JVM_CDS_ENABLED>true</BP_JVM_CDS_ENABLED>
                </env>
            </image>
        </configuration>
    </plugin>

In addition, since image generation automatically includes the training phase, the app must be able to start successfully inside the build container, where the AWS services are not available. For this reason, the optional: prefix was added to the following property in application.properties:

spring.config.import=optional:aws-parameterstore:/config/localstack/?prefix=localstack.

Application started in 1.119 seconds:

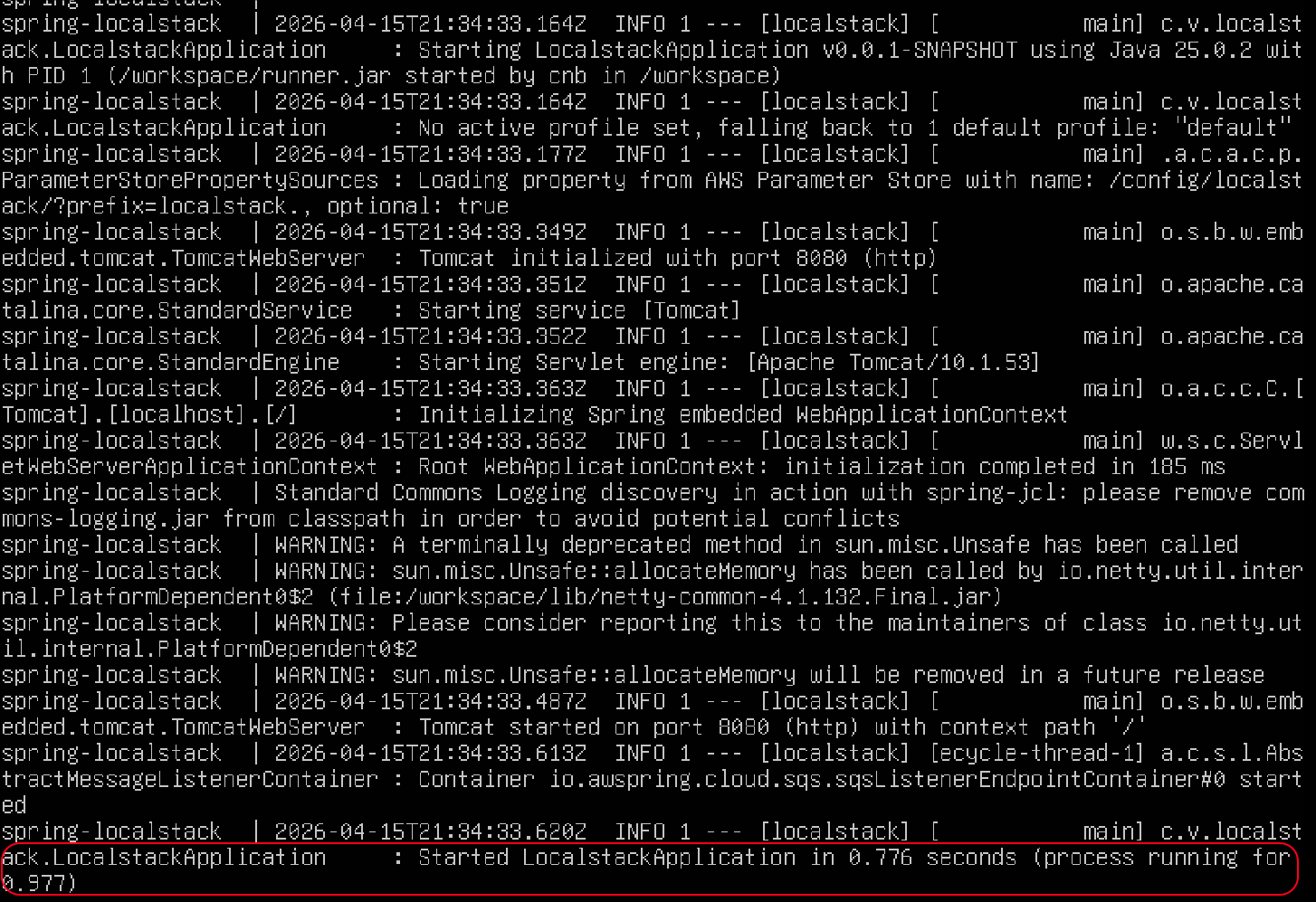

Third test: Project Leyden with Java 25

To benefit from Project Leyden, Java must be upgraded to version 24 or later (in this case I used 25, the current LTS). The table below compares what CDS on Java 21 and Project Leyden on Java 24+ provide:

| Feature | Java 21 + CDS | Java 24+ + Leyden |
|---|---|---|
| Preloaded classes | ✅ | ✅ |
| Saved JIT code | ❌ | ✅ |
| Pre-initialized heap | ❌ | ✅ |
| Reduced startup time | ✅ | ✅ |
| Reduced time to peak | ❌ | ✅ |

Application started in 0.776 seconds.

Conclusions

In the tests shown in this article, the first major gain is already visible with CDS: startup time goes from 4.136 seconds with the standard JVM down to 1.119 seconds on Java 21. With Project Leyden on Java 25, startup time drops further to 0.776 seconds, delivering a very concrete improvement without rewriting the application and without giving up the typical dynamism of the JVM.
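
To put these numbers in perspective, the relative gains can be computed directly from the three measurements. The sketch below uses only the startup times reported above; the class and method names are illustrative.

```java
import java.util.Locale;

class StartupGain {

    /** How many times faster the improved startup is compared to the baseline. */
    static double speedup(double baselineSeconds, double improvedSeconds) {
        return baselineSeconds / improvedSeconds;
    }

    /** Percentage of the baseline startup time that was removed. */
    static double reductionPercent(double baselineSeconds, double improvedSeconds) {
        return (baselineSeconds - improvedSeconds) / baselineSeconds * 100.0;
    }

    static void report(String label, double baseline, double improved) {
        System.out.println(String.format(Locale.ROOT, "%s: %.2fx faster, %.1f%% less startup time",
                label, speedup(baseline, improved), reductionPercent(baseline, improved)));
    }

    public static void main(String[] args) {
        double standardJvm = 4.136; // Java 21, standard JVM
        double cds = 1.119;         // Java 21 + CDS
        double leyden = 0.776;      // Java 25 + Project Leyden

        report("CDS vs standard JVM", standardJvm, cds);
        report("Leyden vs standard JVM", standardJvm, leyden);
        report("Leyden vs CDS", cds, leyden);
    }
}
```

In other words, CDS alone makes startup roughly 3.7 times faster than the standard JVM, Leyden roughly 5.3 times faster, and Leyden removes about 30% of the startup time that remains with CDS.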

In practice, GraalVM remains the more aggressive choice when the main goal is minimizing startup time and footprint, but Project Leyden looks like the more pragmatic option for many Spring Boot applications: zero code changes, natural integration with the Java runtime, and benefits both in startup time and in time to peak. It is still an evolving technology, but these results already show clearly that it is worth starting to experiment with it.

References

- https://openjdk.org/projects/leyden/

- https://openjdk.org/projects/leyden/slides/leyden-heidinga-devnexus-2024-03.pdf

- https://openjdk.org/projects/leyden/slides/leyden-jvmls-2023-08-08.pdf

